Migrating to Jetpack Compose: How AI Accelerated Our Journey at Caper

Author: Matt Kranzler

Introduction

At Instacart, our Caper smart carts bring together AI, computer vision, and real-time data to power the future of in-store shopping. Customers scan items as they shop and pay directly on the cart. The Android app powering each cart handles everything from barcode scanning to payment processing, making stability critical in live retail environments.

Like many Android teams, we built our app using Fragments and XML layouts. By 2024, the ecosystem had moved to Compose-first, and staying on Fragments meant accumulating technical debt. Migrating hundreds of Fragment-based screens to Jetpack Compose is a significant undertaking, especially when a crash can lead to cart abandonment.

We initially scoped the migration as a multi-quarter effort. With AI coding assistants, our refactoring became significantly faster than anticipated.

This post outlines our four-phase migration strategy and how we used AI to transform tedious refactoring into a major accelerator.

The Four-Phase Migration Strategy

When we finally decided to adopt Compose, we started with a hybrid approach using Fragments as wrappers around Compose UI:

class ShoppingCartFragment : BaseComposeFragment() {

    @Inject lateinit var viewModel: ShoppingCartViewModel

    @Composable
    override fun Content() {
        val viewState by viewModel.state.collectAsStateWithLifecycle()
        ShoppingCartView(viewState)
    }
}

Our goal was clear: get off Fragments entirely and build a fully Compose app. Rather than waiting for a “big bang” migration, we designed a four-phase approach that would let us make progress incrementally while keeping the app stable in production.

Phase 1: Implicit Fragment Hosts

Goal: Remove explicit Fragment classes from Compose-based features while maintaining Fragment-based navigation.

Key Insight: Google released navigation-fragment-compose, a library that creates implicit Fragment hosts for Composables. This allowed us to write pure Compose screens without manually creating Fragment wrappers.

We established a new pattern for Compose features:

// MyFeatureScreen.kt

@Composable
fun MyFeatureScreen() {
    // Required parameterless function for fragment-based navigation
    val bindings = provideMyFeatureBindings()
    val viewModel = provideMyFeatureViewModel()
    val fragment = LocalFragment.current
    val navController = fragment.findNavController()

    MyFeatureScreenInternal(
        viewModel = viewModel,
        scaffold = bindings.scaffold(),
        navigateBack = { navController.popBackStack() },
        navigateToDetails = { id -> navController.navigate(R.id.details_fragment) }
    )
}

@Composable
internal fun MyFeatureScreenInternal(
    viewModel: MyFeatureViewModel,
    scaffold: ScaffoldProvider,
    navigateBack: () -> Unit,
    navigateToDetails: (String) -> Unit,
) {
    val state by viewModel.state.collectAsStateWithLifecycle()
    MyFeatureView(state = state, scaffold)

    LaunchedEffect(viewModel) {
        // handle effects & invoke navigation callbacks
    }
}

The split between the parameterless MyFeatureScreen() and internal MyFeatureScreenInternal() was intentional. The outer function handles navigation and dependencies (tied to Fragment world), while the inner function is pure Compose with callbacks, preparing us for eventual Compose Navigation.
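The LaunchedEffect body in the snippet above is elided, so the following is only a sketch of what "handle effects & invoke navigation callbacks" could look like. It is plain Kotlin with no Compose or Fragment dependency so that it runs on its own. MyFeatureEffect and dispatchEffect are hypothetical names introduced for illustration, not code from the Caper app.

```kotlin
// Hypothetical one-off effects a ViewModel might emit (illustrative names only).
sealed interface MyFeatureEffect {
    object NavigateBack : MyFeatureEffect
    data class NavigateToDetails(val id: String) : MyFeatureEffect
}

// Translates an effect into one of the navigation callbacks.
// This function has no knowledge of Fragments or NavController.
fun dispatchEffect(
    effect: MyFeatureEffect,
    navigateBack: () -> Unit,
    navigateToDetails: (String) -> Unit,
) {
    when (effect) {
        is MyFeatureEffect.NavigateBack -> navigateBack()
        is MyFeatureEffect.NavigateToDetails -> navigateToDetails(effect.id)
    }
}

fun main() {
    // Plain lambdas stand in for the NavController-backed callbacks.
    val calls = mutableListOf<String>()
    val effects = listOf(
        MyFeatureEffect.NavigateToDetails("coupon-1"),
        MyFeatureEffect.NavigateBack,
    )
    effects.forEach { effect ->
        dispatchEffect(
            effect = effect,
            navigateBack = { calls += "back" },
            navigateToDetails = { id -> calls += "details:$id" },
        )
    }
    println(calls)
}
```

Because the inner layer only sees callbacks, swapping Fragment navigation for Compose Navigation later should mean changing what the lambdas do, not the screen logic.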

AI Acceleration in Phase 1

We completed this phase manually without AI assistance. We adopted this pattern for new features while gradually migrating existing ones. This manual work was essential for understanding the migration patterns, edge cases, and conventions that would later inform our AI-assisted approach in subsequent phases.

Phase 2: Type-Safe Navigation

Goal: Migrate from XML nav graphs and resource IDs to Kotlin DSL with type-safe routing.

Key Insight: Compose Navigation doesn’t support resource IDs. Rather than migrate directly to Compose Navigation (which would be a massive lift), we could migrate to the Fragment-based Kotlin DSL graph first. This gave us type-safe routing while keeping Fragment navigation, making the eventual move to Compose Navigation much simpler.

For each feature, we needed to:

1. Define type-safe route objects:

// feature/api/CouponDetailsRoutes.kt

@Serializable
data class CouponDetailsDialogRoute(
    val couponId: String,
    val eligibleItemsCollapsed: Boolean = false,
    val showClipAnimations: Boolean = false,
    val featuredItemImageUrl: String? = null,
) : DialogRoute

2. Create NavGraphBuilder extensions:

// feature/impl/CouponDetailsNavigation.kt

fun NavGraphBuilder.couponDetailsRoutes() {
    composableDialogFragment<CouponDetailsDialogRoute>(
        fullyQualifiedName = "com.instacart.cart.feature.coupondetails.impl.ui.CouponDetailsDialogKt\$CouponDetailsDialog"
    )
}

3. Build the Kotlin DSL nav graph:

// Before: XML-based
val xmlGraph = navController.navInflater.inflate(R.navigation.nav_graph)
navController.setGraph(xmlGraph)

// After: Kotlin DSL
navController.graph = navController.createGraph(
    startDestination = InitializationNavGraphRoute
) {
    initializationRoutes()
    onboardingRoutes()
    shoppingCartRoutes()
    productDetailsRoutes()
    checkoutRoutes()
    couponDetailsRoutes()
    // ... all other routes
}

4. Support gradual migration by combining old and new:

val xmlGraph = navController.navInflater.inflate(R.navigation.nav_graph)

navController.graph = navController.createGraph(
    startDestination = startDestination
) {
    // New type-safe routes
    onboardingRoutes()
    couponDetailsRoutes()
}.also { navGraph ->
    // Still include XML graph for unmigrated features
    navGraph.addAll(xmlGraph)
}

This allowed us to migrate features one by one without breaking anything. Navigation calls could use either approach during the transition:

// Old style (still worked during migration)
navController.navigate(R.id.checkout_fragment)

// New style (type-safe)
navController.navigate(CheckoutScreenRoute)
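To make the coexistence concrete, here is a toy model of a single graph that resolves both kinds of keys. It is not how the Navigation library is implemented; HybridGraph, CHECKOUT_FRAGMENT_ID, and the destination strings are assumptions made up for this sketch. It shows only that resource-ID destinations and typed-route destinations can live side by side once both sets are registered in one graph.

```kotlin
import kotlin.reflect.KClass

// Toy stand-in for a nav graph: maps keys to destination names.
// The real Navigation library also handles arguments, back stack, and deep links.
class HybridGraph {
    private val legacyDestinations = mutableMapOf<Int, String>()
    private val typedDestinations = mutableMapOf<KClass<*>, String>()

    fun addLegacy(resId: Int, destination: String) {
        legacyDestinations[resId] = destination
    }

    fun addTyped(routeType: KClass<*>, destination: String) {
        typedDestinations[routeType] = destination
    }

    // Old style: look up by integer resource ID.
    fun resolveId(resId: Int): String =
        legacyDestinations[resId] ?: error("No destination for id $resId")

    // New style: look up by the route object's class.
    fun resolveRoute(route: Any): String =
        typedDestinations[route::class] ?: error("No destination for ${route::class.simpleName}")
}

// Hypothetical stand-ins for R.id.checkout_fragment and CheckoutScreenRoute.
const val CHECKOUT_FRAGMENT_ID = 1001
object CheckoutScreenRoute

fun main() {
    val graph = HybridGraph()
    graph.addLegacy(CHECKOUT_FRAGMENT_ID, "CheckoutFragment")
    graph.addTyped(CheckoutScreenRoute::class, "CheckoutScreen")

    println(graph.resolveId(CHECKOUT_FRAGMENT_ID))
    println(graph.resolveRoute(CheckoutScreenRoute))
}
```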

AI Acceleration in Phase 2

We started Phase 2 in late 2024 by manually migrating navigation graphs. The work was methodical but slow, with each sub-graph migration taking anywhere from a couple hours to much longer depending on complexity. We had 30+ sub-navigation graphs and 130+ destinations to migrate.

By early 2025, we began experimenting with AI-assisted migrations. The capabilities of large language models had improved significantly, representing a major leap in code understanding and refactoring accuracy. We developed an iterative workflow:

- Learn by Doing: Complete a few migrations manually to understand patterns and edge cases

- Use Git History as Context: Provide the AI with commit diffs showing complete transformations

- Correct and Refine: Watch changes in real-time and immediately correct anything that doesn’t match conventions

- Update the Migration Guide: Capture learnings in markdown (naming conventions, edge cases, common mistakes)

- Repeat with Improved Context: Each iteration used updated guides and examples from previous successes

This interactive correction was crucial. As models improved, tasks that once required manual fixes and smaller chunks became single-session successes with cross-file awareness and consistent style.
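As a rough illustration of the "git history as context" step, the sketch below assembles a migration guide, a few example commit diffs, and a task description into one prompt. The function names, the prompt layout, and the sample commit hash are all assumptions for this example; the post does not describe the exact prompt format or tooling used.

```kotlin
// One completed migration, used as a worked example for the model.
data class ExampleDiff(val commit: String, val diff: String)

// Reads a commit's message and patch via `git show`; must run inside a git repository.
fun readCommitDiff(commit: String): ExampleDiff {
    val process = ProcessBuilder("git", "show", commit).redirectErrorStream(true).start()
    val output = process.inputStream.bufferedReader().readText()
    check(process.waitFor() == 0) { "git show failed for $commit: $output" }
    return ExampleDiff(commit, output)
}

// Assembles guide + examples + task into a single prompt string.
fun buildMigrationPrompt(guide: String, examples: List<ExampleDiff>, target: String): String =
    buildString {
        appendLine("## Migration guide")
        appendLine(guide.trim())
        examples.forEachIndexed { index, example ->
            appendLine()
            appendLine("## Example ${index + 1} (commit ${example.commit})")
            appendLine(example.diff.trim())
        }
        appendLine()
        appendLine("## Task")
        append("Migrate $target following the guide and the examples above.")
    }

fun main() {
    // "abc1234" is a placeholder hash; in practice readCommitDiff(...) would supply real diffs.
    val prompt = buildMigrationPrompt(
        guide = "Name route files <Feature>Routes.kt.",
        examples = listOf(ExampleDiff("abc1234", "- old line\n+ new line")),
        target = "the checkout sub-graph",
    )
    println(prompt)
}
```

Keeping the guide in a file and the examples as commit references means each iteration of the loop can improve the context without touching the assembly code.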

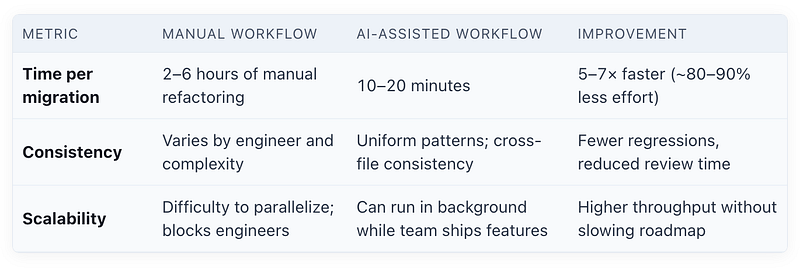

The impact was substantial:

Notes:

- AI reduced variance across migrations, improving quality and predictability.

- Parallelization amplified speed gains: migrations continued while feature development stayed unblocked.

- Migration time still varied by graph complexity, but the AI workflow dramatically reduced the long tail.

- Improvements compounded as the migration guide improved over time.

Phase 3: Fragment to Compose Migration

Goal: Migrate all Fragment-based features to pure Compose screens following our established patterns.

Key Insight: With type-safe routes in place and the fragment-less pattern proven in Phase 1, we can now systematically migrate legacy Fragments to pure Compose.

At the start of Phase 3, we had:

- Type-safe routing with Kotlin DSL nav graph

- Fragment-less pattern for new Compose features

- 100+ features still implemented as Fragments (mix of XML views and Compose in Fragments)

Each feature needs to be converted to follow our Phase 1 pattern: a <Feature>Screen.kt or <Feature>Dialog.kt file with the parameterless and internal Composable functions. This represents the heaviest lift in both technical complexity and developer time.

AI Acceleration in Phase 3

For Phase 3, we evolved our approach even further by creating a comprehensive AI command that serves as both a migration checklist and AI instruction guide. This 17-step workflow breaks down the migration into four main stages:

1. Analysis and Baselining

- Identify the fragment type (Fragment vs DialogFragment)

- Analyze the XML layout and implementation

- Create a baseline screenshot test using Paparazzi to capture the current UI

2. Compose Implementation

- Create the new Compose View with identical UI

- Build the Screen/Dialog structure following Phase 1 patterns

- Set up dependency injection

- Add Compose previews for development

3. Verification and Integration

- Run automated visual parity check between baseline and new Compose screenshots

- Update the navigation graph to use the new Compose route

- Run all tests to ensure no regressions

4. Cleanup

- Remove the old Fragment code

- Final verification
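The visual parity check in stage 3 can be pictured as a pixel-by-pixel comparison of two screenshots. The sketch below is a minimal version of that idea using the JVM's BufferedImage; how the actual automated check is implemented is not described here, and mismatchRatio, isVisuallyEqual, and the tolerance parameter are assumptions for illustration.

```kotlin
import java.awt.image.BufferedImage

// Fraction of pixels whose ARGB values differ between the two images.
fun mismatchRatio(baseline: BufferedImage, candidate: BufferedImage): Double {
    require(baseline.width == candidate.width && baseline.height == candidate.height) {
        "Screenshots must have identical dimensions"
    }
    var mismatched = 0
    for (y in 0 until baseline.height) {
        for (x in 0 until baseline.width) {
            if (baseline.getRGB(x, y) != candidate.getRGB(x, y)) mismatched++
        }
    }
    return mismatched.toDouble() / (baseline.width * baseline.height)
}

// A tolerance of 0.0 demands an exact, pixel-perfect match.
fun isVisuallyEqual(
    baseline: BufferedImage,
    candidate: BufferedImage,
    tolerance: Double = 0.0,
): Boolean = mismatchRatio(baseline, candidate) <= tolerance

fun main() {
    // Two blank 4x4 images; the candidate gets a single red pixel.
    val baseline = BufferedImage(4, 4, BufferedImage.TYPE_INT_ARGB)
    val candidate = BufferedImage(4, 4, BufferedImage.TYPE_INT_ARGB)
    candidate.setRGB(1, 1, 0xFFFF0000.toInt())

    println("mismatch ratio: ${mismatchRatio(baseline, candidate)}")
    println("pixel-perfect: ${isVisuallyEqual(baseline, candidate)}")
}
```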

As we completed migrations using this workflow, we continued refining the guide based on learnings. Eventually, we formalized this migration guide into an AI skill, a reusable module that packages the entire 17-step workflow with context and conventions. Skills enable progressive disclosure of information, allowing the AI to access exactly what it needs at each step without overwhelming the context window. This evolution from markdown guide to structured skill dramatically improved migration effectiveness and consistency.
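Progressive disclosure can be modeled as a short overview that is always available plus per-stage details that are loaded only when needed. The sketch below is a conceptual model in plain Kotlin, not the format of any particular AI skill system. Stage, Step, MigrationSkill, and the sample step text are hypothetical.

```kotlin
// The four stages of the workflow described above.
enum class Stage { ANALYSIS, IMPLEMENTATION, VERIFICATION, CLEANUP }

// A single step: a short title plus the longer instructions behind it.
data class Step(val stage: Stage, val title: String, val details: String)

class MigrationSkill(private val steps: List<Step>) {
    // Always-available summary: stage and title only, so it stays small.
    fun overview(): String =
        steps.joinToString("\n") { "- [${it.stage}] ${it.title}" }

    // Loaded on demand: full instructions for one stage only.
    fun detailsFor(stage: Stage): String =
        steps.filter { it.stage == stage }
            .joinToString("\n\n") { "${it.title}\n${it.details}" }
}

fun main() {
    // Sample steps paraphrased from the stage list; the details text is invented.
    val skill = MigrationSkill(
        listOf(
            Step(Stage.ANALYSIS, "Identify the fragment type", "Fragment vs DialogFragment."),
            Step(Stage.VERIFICATION, "Run visual parity check", "Compare baseline and Compose screenshots."),
        )
    )
    println(skill.overview())
    println()
    println(skill.detailsFor(Stage.VERIFICATION))
}
```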

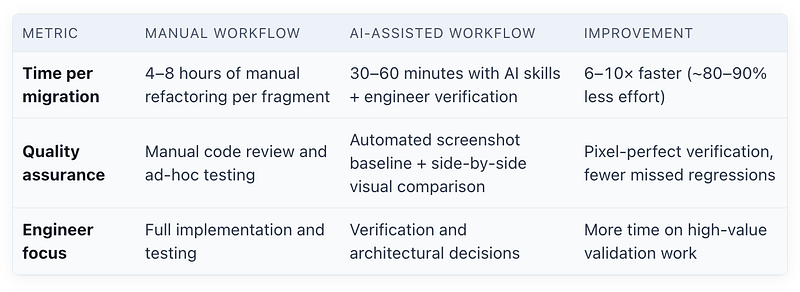

The impact so far:

Notes:

- Phase 3 uses AI skills with engineer verification checkpoints, unlike Phase 2’s fully automated approach. We generate Paparazzi screenshots of the original Fragment view, which inform the AI in building the Compose version. Engineers then compare screenshots of both versions to verify visual parity, ensuring pixel-perfect matches.

- Progressive disclosure of context allowed AI to handle complex, multi-file migrations consistently.

- Migration time varies by fragment complexity, but the AI workflow dramatically reduces the long tail.

Principles for AI-Assisted Refactoring

Our migration from Fragments to Compose, accelerated by AI tooling, surfaced several core principles about how large-scale modernization projects are evolving. Success depends not just on adopting new tools, but on integrating them with strong engineering practices and a pragmatic approach to risk management.

1. The Economics of Technical Debt Have Changed

The emergence of powerful AI coding assistants has fundamentally altered the cost-benefit analysis of addressing technical debt.

AI excels at repetitive, mechanical refactoring. The 5–7x speed increase we observed in Phase 2 saved an estimated 300–350 engineering hours and made migrations feasible that were previously too tedious to justify.
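As a back-of-envelope check on how a speedup relates to hours saved: if work that takes T hours manually runs s times faster, it takes T / s hours, so the saving is T × (1 − 1/s). The sketch below encodes that relation. It assumes the speedup applies uniformly to all migrated work, which is a simplification, and the implied baseline it produces is an inference from the two published ranges rather than a measured figure.

```kotlin
// Fraction of manual effort saved when the same work runs `speedup` times faster.
fun fractionSaved(speedup: Double): Double {
    require(speedup > 1.0) { "speedup must be greater than 1" }
    return 1.0 - 1.0 / speedup
}

// Manual-effort baseline implied by a number of hours saved at a given speedup.
fun impliedManualHours(hoursSaved: Double, speedup: Double): Double =
    hoursSaved / fractionSaved(speedup)

fun main() {
    // Ranges quoted in the text: 5-7x faster, 300-350 hours saved.
    for (speedup in listOf(5.0, 7.0)) {
        println("speedup ${speedup}x saves ${fractionSaved(speedup) * 100}% of manual effort")
    }
    // Smallest and largest baselines consistent with those ranges under the uniform assumption.
    println("implied baseline (low):  ${impliedManualHours(300.0, 7.0)} hours")
    println("implied baseline (high): ${impliedManualHours(350.0, 5.0)} hours")
}
```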

This shifts the engineering role. Previously, the engineer’s time was spent executing the changes. Now, the engineer’s primary responsibility moves from execution to definition and validation:

- Architecture and Planning: Defining the migration phases and the desired end-state architecture

- Pattern Definition: Establishing the explicit conventions the AI must follow (see Principle 2)

- Validation and Oversight: Reviewing the AI-generated code for correctness, performance, and subtle runtime errors that the AI might miss

Large-scale refactoring, once a months-long grind that slowed feature development, is becoming far more manageable.

2. Treat AI Instructions as Code

We achieved the most consistency and highest accuracy when we stopped writing documentation for humans and started writing instructions specifically for AI consumption. Our 325+ line migration guide is effectively a program that the AI executes.

Documentation written for AI agents must be far more explicit. It forces you to articulate conventions, edge cases, and decision points that human developers may or may not intuit. This documentation serves triple duty: AI agents execute it autonomously, human developers use it as a checklist, and code reviewers verify that the steps were followed.

Like code, these guides require investment and must be iterated upon. After 5–6 migrations, we refined the guide until the process became highly predictable. The evolution from markdown guides to structured AI skills represents the formalization of this principle.

3. Incrementalism Mitigates AI Risk

Our four-phase strategy was essential for managing risk, especially in a critical hardware environment like ours. By decoupling the navigation refactor (Phase 2) from the UI refactor (Phase 3), we were able to ship small, AI-generated changes incrementally. Each phase had clear success criteria and could be validated in production before moving forward, reducing the blast radius of any potential errors.

4. Invest in the Workflow, Not Just the Tool

The AI tooling landscape moves incredibly fast. The specific tool matters less than the workflow surrounding it. Our iterative feedback loop unlocked velocity: learn by doing, provide context through git history, correct in real-time, update the guides, and repeat.

During our migration, new capabilities like AI skills emerged that dramatically improved our effectiveness. However, we had to adapt our workflow to capture the full benefit. Teams that simply adopt new tools without evolving their processes will miss most of the gains.

Realizing these productivity gains requires a human shift. Effectively collaborating with AI requires new skills: prompt engineering, creating effective migration guides and skills, and developing intuition for when to trust the AI versus when to intervene. Share your learnings with your team and demonstrate the impact.

What’s Next

Phase 4: Compose Navigation

With Phase 3 well underway, we’ve already begun work on the final step: migrating from Fragment-based navigation to Compose-based navigation. We’re running Phase 4 in parallel with completing Phase 3, using feature flags to support both navigation systems during the transition.
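One way to picture running both navigation systems behind a feature flag is a small abstraction with two implementations that accept the same type-safe route objects. This is a simplified sketch, not our production setup: AppNavigation, the two implementation classes, navigationFor, and the string results are hypothetical, and a real app would read the flag from its feature-flag service.

```kotlin
// Minimal navigation abstraction; both implementations accept the same route objects.
interface AppNavigation {
    fun navigate(route: Any): String
}

// Stand-in for the existing Fragment-based navigation path.
class FragmentBackedNavigation : AppNavigation {
    override fun navigate(route: Any) = "fragment-nav -> ${route::class.simpleName}"
}

// Stand-in for the new Compose-based navigation path.
class ComposeBackedNavigation : AppNavigation {
    override fun navigate(route: Any) = "compose-nav -> ${route::class.simpleName}"
}

// Hypothetical flag-driven selection between the two systems.
fun navigationFor(useComposeNavigation: Boolean): AppNavigation =
    if (useComposeNavigation) ComposeBackedNavigation() else FragmentBackedNavigation()

// Stand-in for a type-safe route defined in Phase 2.
object CheckoutScreenRoute

fun main() {
    // Call sites stay identical regardless of which system the flag selects.
    println(navigationFor(useComposeNavigation = false).navigate(CheckoutScreenRoute))
    println(navigationFor(useComposeNavigation = true).navigate(CheckoutScreenRoute))
}
```

Because the route objects from Phase 2 are shared, call sites do not need to know which system is active.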

Because we completed the previous phases, Phase 4 is significantly simpler. The type-safe routes are in place, screens are migrating to pure Compose, and we’ve established proven migration patterns. Early experiments with AI skills for this phase have shown promising results, and we expect this final phase to proceed even faster than Phase 3.

Scaling AI-Assisted Refactoring Across Instacart

The learnings from this migration extend far beyond our Caper team. We’ve shared our approach through internal tech talks and documentation, and other engineering teams across Instacart are now using AI skills to tackle their own large-scale refactoring challenges.

Teams have adopted the same patterns we developed: treating AI instructions as code, building structured skills for repetitive migrations, and establishing iterative workflows with human oversight. Engineers across the company are applying these techniques to address technical debt that had been deprioritized for years.

The fundamental shift is this: the economics of technical debt have changed. Projects that previously had poor ROI due to high manual labor costs are now feasible. With significant efficiency gains and the ability to run migrations in parallel with feature development, teams can maintain velocity on their roadmap while systematically paying down technical debt.

This isn’t just about our Fragment-to-Compose migration. It’s about establishing a repeatable playbook for how engineering teams at Instacart can leverage AI to modernize our codebase at a pace that was unimaginable just a year ago.

Conclusion

At Instacart, our Caper smart carts are a key part of our Connected Stores technology, helping grocery retailers move faster and innovate with confidence. Delivering a best-in-class product requires disciplined refactors like our Compose migration. Investing in AI is core to our engineering mission, and we use it throughout our internal work to move quickly and deliver value. Our partners count on our AI expertise to unlock results faster, and we remain focused on advancing the in-store experience.
